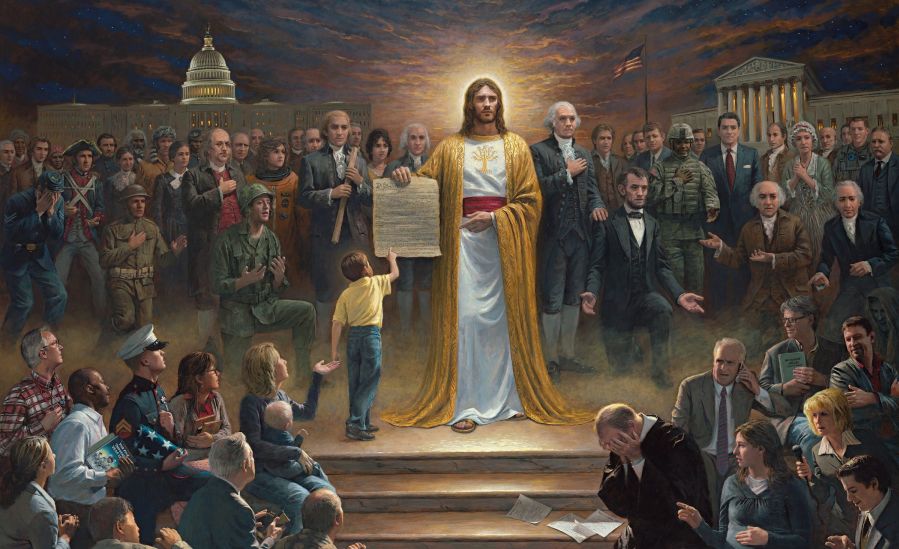

Are We a Christian Nation?

The debate is about as old as this country itself, and you need not travel far to find supporters for both sides of the argument. Many people insist that although Christianity remains the dominant religion in the United States, we are not, by definition, a "Christian country". They point to the Constitution, which is devoid of references to God and does not advocate for any particular religion over another.

However, there are those who disagree. They contend that our country was founded on Christian ideals, and therefore it must be Christian. Their arguments frequently cite implicit references to religion found in the Constitution, such as the phrase "in the Year of our Lord," which appears near the end of the document.

For obvious reasons, this conversation usually ends in a stalemate. So, let's throw a wrench into it: were the Founding Fathers actually Christians?

The Faith of the Founders

The Founders were all religious people; that fact is widely acknowledged. What is often disputed, however, is (1) the nature of their faith, and (2) whether they thought America ought to be based on Christian beliefs.

On the first point, certain historians insist that the Founders were not actually Christians, at least not in the traditional sense. According to this theory, their faith was better characterized as Deism: a belief in a divine creator of the earth, but one that has since relinquished contact with the human world. The Founders were people of faith with strong Christian values, the thinking goes, but may not have identified with Christianity as we commonly understand it.

Of course, this is just a theory. Other experts will argue that the Founders were devout Christians. However, if they were in fact Deists, then it becomes quite difficult to argue that they intended to create a Christian country.

Christian Influence Today

While the Constitution may not explicitly say so, there is a lot of evidence that we do live in a Christian country. Think about all the elements of Christianity we encounter on a daily basis. The phrase "In God We Trust" appears on all our coins and paper money, raising questions about the supposed separation of church and state. In 1954, Congress voted to add the words "under God" to the United States Pledge of Allegiance, which is recited by children in schools across America every day. Not all school districts require the pledge, but many do.

In the realm of education, Christian beliefs have long been present. Over the years, there have been countless attempts to incorporate the Bible into the student curriculum. For example, many schools teach creationism as an alternative to evolution. But it doesn't stop there: youth sex education courses often invoke conservative religious principles and advocate abstinence-only approaches.

In a recent Pew Research Center study, 32 percent of respondents said people should be Christian to be considered true Americans.

Why Not Endorse Christianity?

You may have heard the common refrain: "the vast majority of Americans have always been Christians, so why shouldn't we be a Christian country?"

Although Christianity is the dominant religion in the U.S., making it the official religion could have serious consequences, consequences that the Founders understood quite well. After all, many colonists came to the New World to flee religious persecution in Europe. If the government were to endorse a specific religion, laws promoting that religion (and suppressing others) might follow.

In an 1814 letter, Thomas Jefferson famously wrote that "Christianity neither is, nor ever was a part of the common law."

Takeaways

The debate rages on. These days, the argument for protecting Christian beliefs through law is often couched in the importance of religious liberty. Indeed, the free expression of faith is universally supported in this country. However, that support can quickly erode when the expression of a religious belief intentionally violates the rights of others.

What do you think? Is America an "unofficial" Christian country? What does the future hold?

90 comments

-

First off, I was raised Jewish, and growing up I always felt I was in a Christian country; almost everything was based on Christian beliefs: legal holidays, prayers, the Pledge of Allegiance, and so on. Now, years later, it is all a mish mosh of celebrated holidays, prayer is basically nonexistent, and my kids don't even know the Pledge of Allegiance.

I am now a practicing Christian, and as one I think times past were a better time to be of the Christian faith in this country of ours. The liberal left has gotten out of hand and has really forsaken the Christians of this country with their godless, or every-god, way of thinking. The worst thing is I was once one of them.

This country was founded on a belief that all should be able to practice what they believe, but this has been at the cost of those of the Christian faith here in the USA.

-

Well said. Because of the liberal left's brainwashing and dumbing down, I am a firm believer in Christ, and America is not about practicing evil Lucifer traits.

-

DO NOT BRING POLITICS INTO THIS. I am a Democrat and I have disdain for Rethuglicans. They are destroying this country on a daily basis as of now. The Fascist in the OVAL office is destructive to this NATION. Trying to block the 1st Amendment rights of Americans. Granting gun rights to released PSYCHIATRIC patients and wife beaters. If you support these things then YOU ARE NOT A MINISTER. You are a FALSE PROPHET.

-

"Rethuglicans"? Name calling doesn't prove your point; it proves that there's no substance behind your point. If you can't carry on a civil discussion, it's time to remain silent. Remember the wise words of Benjamin Franklin: "It's better to remain silent and be thought a fool than to open your mouth and remove all doubt." You, sir, have removed all doubt.

-

Does the LIBERAL baby need his bottle and a safe space? LMAO! I can't wait for Trump to DEPORT you!

-

Very refreshing and insightful comment. Pithy and on point. I don't know that many could state it in the way you did AND do it in fewer than 140 characters. Really advanced the course of the dialogue. How could anyone possibly refute you? Glad you were able to share, but, please, next time don't hold back...say what you really think. There definitely is a world shortage of thinkers like yourself. Looking forward to your next entry.

-

You must have been deeply hurt. I'm sorry that happened to you, and I wish you rapid and thorough healing.

Even though you come to this forum and use language of contention, I'm glad you're here. I hope you take the opportunity to begin healing.

Be well.

-

-

While I agree with much of your comment, I question whether name calling is necessary. I'm neither Democrat nor Republican, as both parties are corporatist; however, I find Democratic policy to be more palatable from a humanitarian point of view.

-

-

I had to smile when I read your "Lucifer" comment. The name Lucifer appears only once (Isaiah 14:12) in the antiquated KJV1611 and a shoddy translation of the Latin Vulgate, which uses a lowercase "lucifer", so in context, it isn't even a proper noun. Also, "lucifer" is rendered as "morning star" or "shining one" in more recent translations. "Lucifer" is not the Devil; it was a reference to Nebuchadnezzar II, king of Babylon.

-

-

"This country was founded on a belief that all should be able to practice what they believe, but this has been at the cost of those of the Christian faith here in the USA"

What cost? As far as I can tell, all are able to practice whatever their beliefs may be ... in private, in their homes, in their places of worship. And that's where it should end, IMHO.

As for the mish-mash of holidays, where we went wrong was trying to accommodate non-Christian faiths by creating additional religious holidays. If we had removed all faith-based "holidays" from our secular institutions, we would have avoided the mish-mash and the conflicts. And it only would have hurt for a little while.

-

Some excellent points, thanks

-

Last I read, the Constitution states, "Congress shall make no law respecting an establishment of religion, or prohibiting the free exercise thereof..." It does not say "as long as the people exercise their religion in private, in their homes, or in their places of worship only, or wherever permitted by Stephen Wehrenberg." So, Stephen, the moment you begin telling Christians where and how they can practice their faith, you have become an un-American tyrant.

Of the two founding documents, the Declaration of Independence mentions the Creator several times, including acknowledging that our rights flow from God and that it is He, not men, who has endowed us with the unalienable rights of Life, Liberty and the pursuit of Happiness.

The object of the Constitution was to establish a secular state, and therefore it has no mention of God, the Creator or Providence; the very concept of the secular state is formulated from the Protestant Reformation, which philosophically separated the church from the state. That in no way indicates the Founders were not mostly Christians.

Coming from Philadelphia and growing up in Center City, I played where the Founders walked. I sat in Christ's Episcopal Church in the pews labeled "Washington Family" and "Adams Family" and played on the grave of Benjamin Franklin and his wife in the Phila. Quaker Cemetery. And as the person posting this pointed out, Jefferson stated "Christianity neither is, nor ever was a part of the common law," which is the essence of a secular legal system. You should also understand that the First Amendment applies to "Congress" and therefore to the federal government only, not the states. In fact, Pennsylvania was "The Quaker State", I believe Maryland was officially Roman Catholic, and it goes on.

America was never founded as, nor meant to be, a Christian nation, if by that you mean having a specific law requiring citizens to be Christian. And though the Founders were not all Christians (I think there were a few Jews in there, some atheists and, as mentioned, deists), there were no founders who were adherents of religions not associated with Western Civilization and Western Culture. So while America was not exclusively Christian, it was set up to be profoundly Western in culture.

-

Congress established religion the moment they added "In God we trust" and "Under God". Also, the fact that many government agencies are closed on "Christian" holidays seems like an endorsement of a particular religion to me.

-

Congress didn't establish religion. They caved to McCarthyism and embraced fascism in order to be further differentiated from the Soviets. History is your friend. Ignorance, not so much...

-

Think you're confused. Exactly what religion is 'God'?

-

Neither our money nor the Pledge of Allegiance is a founding document; therefore, those phrases do not constitute an establishment of religion.

-

-

-

Creating a mutual respect and clear lines between faith and state? I like the idea, to be honest. Religious convictions are best kept to personal and private devices. Home, family, church and groups geared specifically for such things just seem to be far more evenly respectful to all. Unless and until someone tries to force someone else to be any certain way, just leave it be.

-

Thanks for stating the issue so succinctly. You're absolutely correct. Political discourse is about the state, which is not permitted involvement in religion. Faith is holding a belief in that which cannot be proven...that's what the phrase 'have faith in...' conveys.

People may have faith in their version of the bible or other texts they hold to be sacred, but that doesn't make it a fact. And certainly not an objective truth.

Americans should revel in their freedom to practice their faith in whatever manner they choose, but they are impinging on my freedom when they push for changes in the laws. Seeking interpretation of the law in our courts is proper, but don't ask for changes in laws to take others' freedoms.

-

-

HEAR, HEAR‼️

-

-

That a group holding an unfair advantage over others pays the cost of an expansion of justice is not a problem. It's just the balancing of the scales.

-

-

Hi guys, I am not American, but wasn't America founded by many members of the Masonic Order, who worshiped the Great Architect of the Universe? Given that this is just another name for God, and God isn't a Christian, I think that would allow anyone to honour any God as long as they followed commandments along the lines of 'don't harm anyone else for any reason' (Jesus, not OT). Just so you know that I am not just taking a swipe at America: Australia is an incredibly multicultural country now. Our founding fathers were a bunch of explorers, soldiers, murderers and thieves... genocide was the name of the game. If you were Aboriginal you weren't classed as human, or as advanced as other societies, and therefore it was just fine to exterminate your tribe. That's very biblical, and since some of those individuals did think they were Christian, it appears they took their lead from Deuteronomy 7:1-5, where God commanded the Israelites to kill anyone who got in the way of their invasion of the 'promised land'. And no, I am not Aboriginal either... I just don't think that Christians are any different from every other 'religion', or set of beliefs, on this planet, and so they have no right to say 'this is only a Christian country' when our countries are already inhabited by people of so many other faiths.

-

Amendment I of our Constitution, in the "Establishment Clause", is very clear on this issue. We are not a "Christian" nation nor an Islamic one, nor should our nation ever be identified with any organized religion or with none at all. The only "religious" appellation that would perhaps be at all appropriate would be "Humanist", but I feel that that would not be allowable under the 1st Amendment.

-

Short answer: no. As for the future, I hope it brings what the present was supposed to be -- a nation where all can practice their religion while respecting that of others. Where you can hold just about any belief about your imaginary friends, as long as you don't insist that others share those beliefs, or change their behavior to conform with the proscriptions of your faith. I hope. But I have doubts these days.

-

From the very beginning, from the First People to the explorers thereafter, whose religious worship and spiritual beliefs were many and varied, this continent has always been, and will always be, a melting pot of religious/spiritual practices. So, no, this land has never been, and never will be, a Christian country.

-

We can have this conversation because of this U.S.-born and headquartered organization, one where I, a pagan priest, may be legally ordained to be there for those that need such services. This could not happen in an exclusively Christian country, and as an American I'm personally thankful for such freedom. I'm sure many of my countrymen here associated with the ULC feel similarly. Many want the U.S. to be a Christian-only or Christian-legislated country in some form or another, and arguments invariably point back to certain historical founders' possible intentions, but could they have envisioned the world before us now? It's on us, today, to decide what we will be, and today many of us seem to want an inclusive rather than an exclusive law regarding such freedom.

Culturally, this country's dominant religion is and has been Christianity, and even the language of the ULC itself is proof of that ("Universal Life Church", "minister", etc.). It's in keeping with what is called a 'missionary religion' (religions that seek to save the world). Today that is a point of contention: how to save the world? Convert all? With guidance? With rule of law? Fewer [religious- or belief-minority] voices ask the question the rest of us may want answered: how to deal with the proselytism that seems so important to the core beliefs of the majority view? Like often answers like, I feel, and peaceful tolerance is often answered with peaceful tolerance, or if you like, the Golden Rule of Matthew 7:12: Do unto others as you would have done unto you.

What do you think?

-

This country has always, on paper, been about having freedom of religion. That means the freedom to worship (or not) as you choose, as long as it doesn't impinge upon the rights of others. Whether someone worships Yahweh, Jesus, Allah, Ganesha, Odin or the Flying Spaghetti Monster should be irrelevant. That is between them and their chosen deity. They all have their respective communities to worship in and edify one another. Ideally, as Americans we should be able to see that all faiths are founded on the ideals of honor, love, justice and brotherhood. Religion, as defined, means to "re-link" both to God (however you define him or her) and to one another.

As to Christianity, Jesus should be the supreme authority, and he gave only two commandments: love the Lord your God with all you are, and love your neighbor as you love yourself. When asked who one's neighbor is, he told the story of the Good Samaritan. This would be like telling someone on the "far right" a story about a good Muslim. The Samaritans were hated by the Jews. So here Jesus says one's neighbors are not just those in our own communities but also those who are very different, and His followers are to treat everyone as he or she would wish to be treated. He also said his followers would be known by their love. You don't see much of these practices in the current form of Christianity.

Worship however you want, but don't despise me because I walk a different path, and don't try to force me to follow yours. If you believe that God will judge all at the end of time, you already acknowledge that it is His job to do so. If a conversion is to be made, that is the job of the Holy Spirit, not any preacher or teacher. The only commandment Jesus gave His followers was to exhibit love to everyone.

If true Christians really wanted to follow what Christ taught, this whole argument would be moot, because most everyone would be drawn to their faith by the love and true sense of community and acceptance everyone received in their presence.

-

Most beautifully stated. Thank you, Katheryne Crowe.

-

A hearty second to Gary's comment. My sad observation over the years is that many people appear to confuse 'knowing (insert your) God' with BEING 'God', and an ill-behaved one, at that...

-

-

I think the gov't is meant to be secular. I think you can name lots of reasons why that is so for yourself :)

-

“Of all the animosities which have existed among mankind, those which are caused by a difference of sentiments in religion appear to be the most inveterate and distressing, and ought to be deprecated. I was in hopes that the enlightened and liberal policy, which has marked the present age, would at least have reconciled Christians of every denomination so far that we should never again see the religious disputes carried to such a pitch as to endanger the peace of society.” ~Founding Father George Washington, letter to Edward Newenham, October 20, 1792

“The United States of America have exhibited, perhaps, the first example of governments erected on the simple principles of nature; and if men are now sufficiently enlightened to disabuse themselves of artifice, imposture, hypocrisy, and superstition, they will consider this event as an era in their history. Although the detail of the formation of the American governments is at present little known or regarded either in Europe or in America, it may hereafter become an object of curiosity. It will never be pretended that any persons employed in that service had interviews with the gods, or were in any degree under the influence of Heaven, more than those at work upon ships or houses, or laboring in merchandise or agriculture; it will forever be acknowledged that these governments were contrived merely by the use of reason and the senses.” ~John Adams, “A Defence of the Constitutions of Government of the United States of America”

“In every country and in every age, the priest has been hostile to liberty. He is always in alliance with the despot, abetting his abuses in return for protection to his own. It is error alone that needs the support of government. Truth can stand by itself.” ~Founding Father Thomas Jefferson, in a letter to Horatio Spofford, 1814

“That religion, or the duty which we owe to our Creator, and the manner of discharging it, can be directed only by reason and conviction, not by force or violence; and therefore all men are equally entitled to the free exercise of religion, according to the dictates of conscience; and that it is the mutual duty of all to practice Christian forbearance, love, and charity towards each other.” ~Founding Father George Mason, Virginia Bill of Rights, 1776

“Persecution is not an original feature in any religion; but it is always the strongly marked feature of all religions established by law. Take away the law-establishment, and every religion re-assumes its original benignity.” ~Thomas Paine, The Rights of Man, 1791

These are just a small sampling of what our founding fathers had to say about religion in government. We were founded as a nation of individuals, united in the belief that "all men are created equal". Most of them were children of those who came here to escape tyranny and religious persecution. Must those few once again try to use "Christianity" like some sort of club to beat the rest of us to death? Keep your faith in your heart, practice good works, love your neighbor as yourself: excellent advice, just like "dream it, live it and grow." Brightest Blessings.

-

https://m.facebook.com/story.php?story_fbid=1323819097710607&id=100002475883012&refid=17&ref=bookmarks&ft=top_level_post_id.1323819097710607%3Atl_objid.1323819097710607%3Athrowback_story_fbid.1323819097710607%3Athid.100002475883012%3A306061129499414%3A2%3A0%3A1491029999%3A-7741103368576968496&tn=%2As

-

-

I firmly believe that this country was founded on the idea that our founders wanted "freedom of personal faith", which doesn't necessarily mean that it was or should be predominantly Christian. This goes on to be expressed in the Constitution: "Freedom of religion", right? I am an American Muslim who in general is proud of this country (yes, I was born here), but I feel that the country is falling apart with half Christians or Sunday-only Christians and, for that matter, any religion that falsifies faith for personal gain. I hope one day that we (the people of the United States) can live together again in agreement and love.

-

Good words. Everyone's beliefs should be respected.

-

-

Liberals need to READ the CONSTITUTION! Just look at our money and our pledge of allegiance... Jesus is right there. Great American heroes like Washington, Jefferson and Reagan LOVED Jesus, something unAmerican Obama and Black Lives Matter activists seem to hate!

-

Jesus is in the Constitution? hahahah Stop- you're killing me.

-

Oh, Mr. Moleman. The US Constitution does not contain the words Jesus or Christ or God. It does state: all men are… endowed by their Creator. Not THE creator. Every man has a Creator of his choosing. (Of course, this applies to women as well.) The word God was inserted into our Pledge and printed on our money during the Cold War, when we were threatened by the godless Soviet Union. In the raw, it was propaganda designed to make us feel righteous in our defense against communism. If you want to be taken seriously, please stick to the facts instead of substituting your own words for those in the documents you reference. It's akin to me calling you Mr. Rodentman in place of your actual name, Moleman, as written. I would never do that because it's not factual. And unless you are a mind-reading time traveler, you cannot presume to know what was in the minds of Washington, Jefferson, or Reagan. Jesus was an activist and a liberal, as judged by the people he consorted with and his agenda regarding the sinners and the poor. The Pharisees and Herod's administration were the greedy and oppressive conservatives. Seems to me you should find a better expression than the word "liberal", something that is definitively a pejorative, instead of one that is Christ-like. Your objective is to get liberals to read the Constitution. I have, and it's easy to see you've been liberal with your use of words that don't appear in the document.

-

Les Godt, you hit the nail right on the head, and on the first try❗️ Well written‼️ It's amazing, and frustrating, to see soooo many people that come up with their own cockamamy interpretations of the facts and figures published here, there, and everywhere...thus showing their level of cultural, intellectual, and educational limitations and incompetencies. Thank God that I have an unlimited supply of 'Patience Pills' from the 'Pharmacy of Life', which allow me to calm down and not say or write things to those people that will make me repent afterwards...?

-

-

-

Oh please. Just read the 1st amendment.

Read also "The Jefferson Bible". Note, for example, his rejection of miracles, the divinity of Jesus, and the resurrection.

Here's a short description: "The Life and Morals of Jesus of Nazareth, commonly referred to as the Jefferson Bible, was a book constructed by Thomas Jefferson in the later years of his life by cutting and pasting with a razor and glue numerous sections from the New Testament as extractions of the doctrine of Jesus. Jefferson's condensed composition is especially notable for its exclusion of all miracles by Jesus and most mentions of the supernatural, including sections of the four gospels that contain the Resurrection and most other miracles, and passages that portray Jesus as divine."

And please don't try to out-Trump Trump with "unAmerican Obama". Also, what problems do you have with Black Lives Matter?

-

Apparently you are not paying any attention to the facts. You seem to think of "alt facts" (lies) when it comes to the founding fathers, the declaration AND the constitution. Read up and know the facts before you make a further foll out of yourself!

-

I think you meant "fool" not "foll" turbo...

-

-

The First Amendment says that all religions are allowed the freedom to worship as they see fit. I'm starting a church that chooses smoking marijuana to commune with the Lord as a First Amendment right, and no state or federal law has the right to deny me that right. I am a Christian, a deist, like our forefathers, but that same freedom must be extended to all other religions, even though I don't believe the same as they do.

-

Simply stated, "One Nation Under God"....God is not a religion...call it what you like. Prime Creator, Mother-God, Yahweh, etc...all prayers are heard and we are being led and changed as I write this message..look into each other's eyes to the souls and make that loving connection, for it is LOVE that makes the difference in changing this planet..not just the USA.

-

It says "In God we trust." It doesn't specify Christian god.

The U.S. was founded on the melting pot, and that's the way it was intended. People in this country have forgotten that and instead of embracing people of all religions (and yes, Islam and even the Satanists), we again try to control and dictate religion.

IF people harm each other in the name of religion, then that's a whole other story (and yes that includes Christians--KKK ring a bell?), and that's not acceptable.

We are the melting pot of the world...or at least we were designed that way. Our country was founded on the principle of seeking refuge from religious persecution and yet, what have we become?

I'm all for In God We Trust as long as you don't tell everyone which God they have to choose.

-

Yes, we need God in everything we do and everything we need help with. You see what happens to a country without God: the nation goes downhill and won't stop.

-

We need to keep God in mind as a guide for everything we do. This includes government work. A system of government founded on God as creator will encourage brotherhood and love for fellow citizens. To erase all references to God in public life is to establish atheism as the de facto state religion.

-

Time and time again, "systems of government founded on God as creator" have caused conflicts. Every "tribe" has its own god, which is a cause to destroy another tribe and its god. This is true of nation states, as well as tribes in the remotest jungle. There is no acceptance of a monolithic god, so belief in god cannot bring harmony to all of humankind. The self-righteous religious leaders, be they Christian, Muslim, Jew, or the sun-worshipping tribe, who are prone to invoking god for a blessing when going to war, most often create conflict to enhance their own power and reap the benefits of such. TV evangelists make war on Satan to acquire wealth. Keep your god in your head, your home, and in the company of like believers. And try not to make a pest of yourself. And I will do the same.

-

I totally agree with your response to the reverend. Thank you for writing it so well. To erase all references to God in public life is not to establish atheism as the state religion, but to let everyone's religion or non-religion be equal in the eyes of the state. That is the brilliance of our forefathers when they wrote the Constitution after escaping from state religion, and it is what makes the United States safe and welcoming for everyone.

-

Belief in God is not enough. Actions are more important than beliefs. Conflicts come from people whose actions are not founded in love, not from belief in God. Our actions should support our beliefs. Just because some have used religion as an excuse to further selfish ends does not mean that we should ignore all spiritual experiences. If you do not believe in God, ask yourself: what is the origin of all the matter and energy in the universe? To deny God is a leap of faith that I just cannot make. We are here for a purpose, which is connected with God as creator. If a belief in God pesters you, why are you a minister?

-

Rev., I think it would be wise to remember that the Universal Life Church and its ministers are patterned from a Christian template, with legal and official terminology, as not all of us are even religious in the strictest sense. I understand that the common conception, backed by a dictionary definition of "minister", disagrees with that, but here we hold a more inclusive idea of "Reverend" and "Minister". I hold a polytheistic, animistic, non-dualistic view, so to hear the suggestion that I'm a minister only so long as I hold to "God as creator" does seem to fly in the face of what this gathering is about, given the inclusiveness and variety of "ministers" ordained and present. This, I think, is the kind of American organization I can get behind: what I want to see of American culture. I am hoping this too is Love.

-

You are right that ULC has a very inclusive scope of those who can become ministers without the requirement to adhere to a particular dogma. This is one of the features that attracted me to it. Other online church groups require a belief in Christianity, which I find too narrow. Even though the structure seems to have a significant contribution from Christian traditions, the website includes many other religions, a feature that I find refreshing. We can be inclusive and tolerant of each other's belief systems and even learn from them. Love is our guiding light. I certainly do not mean to imply that you have to accept the Christian view of God as creator as a prerequisite to becoming a minister, because that would be a dogma. However, I think more of the people who request ordination believe in some form of higher power, which I call God, than do not. Peace unto you and best of success.

-

People would not really want to live in a Christian nation, though some believe they would. Jesus was very clear that his followers should not resist violence, but turn the other cheek. He took "Thou shalt not kill" to the extreme and called on others to follow. He said to live and do as he did, and then allowed himself to be tortured and killed. He rejected defending himself and warned others that following him was very difficult and that most wouldn't want to do it. Judging by all the guns in this country, I guess he called that right.

-

It seems, at its simplest, that the word God encompasses ALMOST all possibilities and potentialities... It seems to me that the expression is meant to be one of inclusion of belief, not an exclusion or a singling out of any one faith as the "right" faith... It doesn't say "in Christ we trust" or "in Allah we trust"... It implies all.

-

ALMOST?? What possibility or potential would you exclude? Some atheists say they have just not found the right god. Does having no god, but living a good life (and everything that implies), preclude one from attaining the promised land?

-

I prefer all religions to stay out of government. To dispense justice unevenly because one does not belong to the "dominant" religion is EVIL. Period.

-

Church and state should be 100% separated; period! One should not affect the other in any way.

-

Amen!

-

America has separation of church and state, as it should. Furthermore, the founders were not Christians and, in fact, made many disparaging remarks about Christianity. We are NOT a "Christian" nation but one of many diverse religions, none of which should be allowed to affect our government, as the government allows for the freedom to follow whichever one chooses.

-

First of all, there is no law stating that this is a Christian Nation, but there are laws that state clearly that it is not (the Treaty of Tripoli), laws that forbid it (the 1st Amendment), and laws that imply that it is not (Article 6 of the Constitution). Overall, I think that makes it clear that this is, according to law, not a Christian Nation.

Second, sans law, what would make us a Christian Nation? Being a Nation that follows the Teachings of Christ might. But every person I know who believes this is a Christian Nation also backs politicians and positions that would take aid away from the poor, whereas Jesus told us to feed and help the poor. For the most part they have also claimed that their positions against abortion and gay marriage are based on their Christian Faith, even though Jesus never said anything on these 2 subjects. They are against helping refugees, which goes against Jesus' teachings ("whatsoever you do unto the least of these, you do unto me").

The question, in the end, is not whether this is a Christian Nation, but whether the majority of people in this country calling themselves Christians are, in fact, Christians at all.

-

Interestingly, the precepts that Jesus preached are totally Jewish, coming from his Jewish tradition. Jews don't believe that everyone has to be Jewish. Just be the best human being you can be, no matter what religion you are. Basically, do unto others as you would have them do unto you.

-

Built into the Constitution is religious freedom. Built into Christianity is evangelism, the necessity of bringing the heathen to Jesus "for their own good". The conflict between these two concepts is why Christians see their religious freedoms stifled when the government will not pass laws which make everyone de facto Christians. Religious opinion has no business dictating government policy. Just sayin'.

-

Separation of church and state is of benefit to religions.

The tendency of politicians throughout history has been to use religion to enforce oppressive policies. So I would advance an addendum to your point: political convenience has no business dictating religious practice. Historically, I think that this is the greater concern, and the trail of "moral majority" money into our political system suggests that it may be the case still today.

-

Is it still "In GOD we trust?" I thought that it had changed to "In .gov we trust."

More importantly: if Jesus was actually around and people honored him enough to offer him the Oval Office, then we wouldn't need the Oval Office. We'd just quietly go around taking care of each other, and planning a future that allowed our descendants to do the same.

-

Can anyone define a 'Christian'? A follower of Christ? In how many ways does one have to follow Christ's teachings and examples to be a real Christian? 5? 10? More? The Sermon on the Mount is said to be the gist of his advice to achieve salvation. Is it enough to claim to be a Christian, if you adhere to these exhortations? Anyone want to say why he/she claims to be Christian? Is believing in the 'Old Testament' a prerequisite to being 'Christian'? Science denial aside, if intelligent life is found on any of the thousands of exoplanets, are we to expect an exo-Christ and exo-Christians? Is free will necessary to be a Christian? That is, does one have to choose to be a Christian? Or does baptism into a Christian church as an infant make one a Christian, even though the infant has no knowledge of Christ? What is the litmus test for entrance into Christianity?

-

There are a number of criteria advanced, but I believe they all have their root in this: God is love, and we manifest our greatest power when we act in love. This was demonstrated by Jesus of Nazareth and his works. His entire ministry was devoted to one thing: breaking the bondage of the theology of sin that convinces us that we are not worthy of love and the power it brings - or conversely, to give us faith in our worthiness to receive those gifts.

The measure of our worthiness is the service that we provide to love in loving one another and the world that sustains us.

This teaching is not unique to Christianity. To be Christian, then, is to tender admiration particularly to Jesus for the discipline of his commitment to love - a commitment that ended with his death at the hands of those he came to serve. Through worship, that strength has been amplified psychically through the centuries, until now it is a pillar against which Christians can rest when they are weary.

-

USA an officially Christian country? Certainly not. Impossible, given our Constitution.

And as for all the comments here about Democrats and liberals, well, maybe this isn't the country for you.

-

One of the great ironies of our history is that the Pilgrims actually founded this land on the basis of freedom of religion. Those people are the Americans who pulled off the Salem debacle, kicked out or killed Jews, made Quakers unacceptable, and reviled people who were of the Church of England. DO NOT ASSERT THAT WE WERE FOUNDED BY CHRISTIANS. These people were as segregated religiously as any I know of.

You should read the Jefferson Bible. He took out everything but what Jesus said. That was his religion. A truthful morality.

We need to grow up, stop labeling, and judge only by individual morality. And you can't know that without KNOWING someone. And then, is judging really OK?

I am truly tired of our society struggling with this nonsense. Either we are good people or bad people. Even criminals are not asked what religion they are. Think about that.

-

Religion belongs at home and in faith communities, not in government, especially not in a culturally diverse society. No religious minority should ever force religious-based legislation. Ethics are higher than morals, and should guide our leaders. Morals vary, whereas ethics are based on reason. Fundamentalists are driven by extreme emotions, highly volatile and dangerous, no matter which religion. They do not lead or govern; they dictate.

-

While many citizens are Christian, this country, since its founding, has never been a Christian country. The contract that establishes it, and its contract to the world, the US Constitution, makes that point clearly. How is there even a question?

-

I love how my fellow Christians complain about persecution. There are many multiples of Christian churches in every town and city in this country, and a lot out in the middle of nowhere. The only thing being objected to about Christians in this country is how a great many of us want to push our beliefs onto everyone else. That is not persecution; that is liberty and justice for all.

-

Some excellent points have been made here. I believe we were intended to have freedom of and from (if we choose) religion, and in separation of church and state. I also think those concepts have been eroded a bit since corporations gained personhood.

-

Our money says 'In God We Trust'. God is not a religion. We sometimes get caught up on labels.

-

We are definitely NOT a Christian Nation, any more than we are a Buddhist Nation, or a Mohammedan Nation, or a Hindu Nation, etc. Such labels foment unnecessary divisions among people, sometimes including hostilities, and that's no way to worship God, or Mohammed, or Buddha, or Brahma, etc. After all, everybody is reaching to God, no matter what name you wish to give to such a Deity.

-

Secularism is for grownups.

-

Rev. Lockett of Share Care Prayer Ministery: I grew up with Jewish people and Christians in America. Though there were many folks from England, Germany, and Ireland, they all spoke English once they came to America. Not today; you never know what they are saying. I believe that since they are in America, they need to speak English. We do the same when we go to their countries: we have a little book with the language in it. Today we translate through cell phones. Along with worship... America is a Christian Nation. Well, now they take down our Nativities and build up their places of worship, when we all worship the same Father in Heaven. You do yours, we do ours. Simple. You came here; we didn't go to your country to live. AMEN.

-

Just a question for all of you theologians out there: can you tell me why every time I see ANY discussion of religion, it is always smashed together with politics? Could it be, just an observation here, that historically religion has its birth in politics? Hmm? Careful with your answer on this one; I have 2000+ years of evidence to support this. Way beyond our understanding, and before anything we consider modern science and medicine, there was faith. Faith was used to unite a population, to control its behavior, and to ensure the survival of the ruling party. That is not made up but a simple fact. Fear of a greater power was used basically to enslave the general thought process of the population, thereby controlling everyday events and giving the ruling party validation of its right to control.

Fast forward to today. We know now that blindly following anything to the level of fanaticism is dangerous and has always led to destruction. Look at the Middle East as an example. Even with all the historical evidence, majorities of the populations still blindly enslave themselves to fanatical beliefs, thereby keeping themselves in the Stone Age. Women have no rights, children go uneducated, services in some areas such as clean water are non-existent, and medicine is frightening.

The point is, every government in the world was founded on a religious belief, including America. America has chosen to try to protect itself from fanaticism by giving its citizens freedom FROM religion. But in just about every historical document you can find the word... GOD. The question is simple: is America a Christian country? As long as we continue to separate church and state, as long as we have freedom from religion, the answer is no. The question was not about how America was founded, but what it is. Peace to you all.

-

My answer to your response is short and to the point..."Thank you."

-

As far as Jesus knew: you sailed past Spain, you fell off into the void! The earth is flat. Jesus didn't even know America existed. He only knew Spain existed because the Romans had it. And if it weren't for the Romans, we would never have heard about Jesus! Think about it.

-

Since there is a strong doctrine of separation of Church and State in the U.S. Constitution, I doubt that U.S. currency is a "Christian" currency. It can, however, be used to buy "Christian" products.

-

Regarding whether or not the US is a Christian Nation, I believe historically it has been, because Christian religions have been the majority religion among our population. But the Government should not be partial to any religion; it should only uphold the religious freedom doctrines of the Constitution.

-

It's a fairly well-known fact that many of the founding fathers were actually Freemasons, which is a group that the Catholic Church often condemns. It is also known by historians that George Washington, for example, NEVER identified as a Christian and would actually circumvent any questions regarding whether or not he was Christian. In fact, he was known to get up and walk out during Sunday services until he was reprimanded for doing so, after which he promised never to walk out again. He kept that promise simply by never going back to church again. Undoubtedly, the Christian faith had SOME influence on the founding of America, but it is also clear that Freemasonic ideals had just as much, if not more, influence. Regardless, it is dangerous to have an official or even unofficial American religion. We are not, nor should we ever be, a theocracy.

Article 11 of the Treaty of Tripoli states that "the Government of the United States of America is not, in any sense, founded on the Christian religion." This does not apply to the people of the US, but it does apply to the government. Thomas Jefferson promoted tolerance above all and said earlier that his statute for religious freedom in Virginia was "meant to comprehend, within the mantle of its protection, the Jew and the Gentile, the Christian and Mahometan, the Hindoo and infidel of every denomination." He specifically wished to avoid the dominance of a single religion. The Declaration of Independence states that "to secure these rights, Governments are instituted among Men, deriving their just powers from the consent of the governed." There is no mention of powers being derived from a supreme being. A war had been fought against a ruler who claimed his power by divine right; the founders had no intention of that situation ever darkening freedom's door. The Federalist Papers are also clear: religion is mentioned only in the context of keeping matters of faith separate from concerns of governance, and of keeping religion free from government interference. We are a nation of many faiths, and most assuredly not a nation of a single faith.

Nope‼️ Each one has a separate AND appropriate place to hang out at...the key word is SEPARATE❗️ Zealous religious people of one sect or another are a threat to society in general because they want to push their own brand of beliefs down my throat, no matter what, and don't care to respect my own. So ???.

The Government of the United States of America was MEANT to comprehend, within the mantle of its protection, the JEW AND THE GENTILE. Mostly, in our U.S., there are Christians and Jews. My question to all of you: remember the picture of Thomas Jefferson taking the OATH of OFFICE to become President, with his hand ON the "Bible"? Thank you very much. Amen. Minister Barbara Lockett

Grasping at straws, and taking social convention of the day to be equal to proof of devoted religious commitment to a particular religion goes way beyond logical fallacy, into the realm of BS.

Absolumundo! [I don't know if that's fake Italian or fake Spanish.]

Barbara Lockett, how in the world do you know it really was a Bible? Were you there? Did some religious fanatic write in the history books that it was the Bible? What if it was the Book of Freemasonry? What if it was any other solemn book other than the Bible? You can only speculate and assume that it was a Bible... and you know what happens when people "assume". Please, expand your 'horizons' and accept that there are other VALID points of view than yours. Have a great life!

Nationality is racial, and so is being born - racial, and so is getting a haircut - racial!!! ONLY "birds of a feather flock together"!!!

I cannot comprehend HOW just such a few folks cannot comprehend Creationism!!! Until HIS Creationism is understood, there exists no platform for discussion!!! Can no one understand that over the past 2000 years, man has changed everything that man 'could change'?

Don't you people know that skunks don't sleep with foxes!!! No wonder that Americanism is nothing but a sinking ship, and we have nobody to blame but ourselves!!! It's always been this way!!!

When you are willing to admit that you need a teacher - contact me!

Should I ever need a teacher, I will look for one who can write comprehensible English. The post above is a mess, including what I can make out of the thinking behind it.

The first Jew has never been born; no one has ever heard of him!!! "We all" know who the first Jacobite, Edomite, Moabite and Ammonite were, but not one of us has ever heard of "the first Jew"!!! He never made entry into The Bible!!!

Yes, man-made labels were stuck on people, places and things in the very late 1770s by scholars!!! However, the first Jew was not any one of those labels!!! When you absolutely cannot find the first Jew, please contact me. Ron - Foreveragoodguy7

Thank you, and very well said. I think all should slow down and not overthink it!!!!! Just believe what you will; the rest will work itself out, for now anyway.